A/B Testing in Marketing: The Best Practices Revealed

After being in marketing for some time, you understand there are too many factors affecting performance. CTA placements, imagery, and even font sizes can make a huge difference for your campaigns.

Moreover, the way these factors are combined might bring you to success or ruin the setup you’ve been building so carefully.

That’s why building any marketing campaign from scratch is always a challenge (albeit an interesting one).

You need to build the whole setup on assumptions, trying to figure out the best message, perfect timing, and even the ideal audience.

In fact, the most interesting part starts when everything is ready. It’s time to test. You start adjusting one thing at a time and seeing how this one small change affects campaign performance.

Once you learn the answer, you change one more element. The process might take forever since it’s never enough when it comes to performance optimization.

This is how A/B testing works.

Now, let’s dive deeper into the essence of the process, discover ideas for A/B testing, and go through the best practices for running effective tests.

- What is A/B testing?

- Validating your ideas

- A/B testing in marketing

- A/B testing in advertising

- How to do A/B testing

- Tools for A/B testing

What is A/B testing?

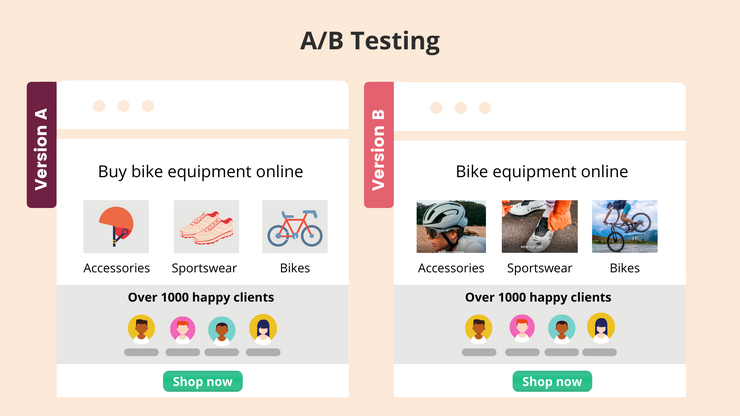

A/B testing is a part of the conversion rate optimization (CRO) process. It involves analyzing how similar audiences react to two versions of the same content, where only one variable is different.

It’s critical that you switch one variable at a time so that you can analyze the effect of the exact change, not a set of factors.

For more precise results, show the two versions to comparable, randomly split segments of the same audience over the same time frame.

It’s also important that you give your A/B tests enough time to run. When testing advertising campaigns, it’s best to run ads for a few weeks. However, the ideal time frame for a test will depend on the campaign budget.

Validating your ideas

Even A/B testing implies some level of risk. It’ll inevitably take some time (and money) before you find a perfect blend of messaging, audience, and channels.

Fortunately, there’s a way to reduce the risk of wasting your budget on the wrong message.

You can see how your audience reacts to your ideas before investing in a large-scale campaign with the help of Message Tester.

All you need is to type in your message and specify your target audience. In 3-4 working days, you’ll receive the data on audience engagement.

On-Demand Video Course On Native Advertising

Boost your ROAS with native ads. Enroll now with our limited 30% discount.

A/B testing in marketing

A/B testing is the best way to optimize your marketing campaigns for conversions.

The results of continuous testing will give you a better understanding of your audience’s behavior, help to make informed decisions regarding your future initiatives, lead to higher engagement rates, and more.

What can you test?

A/B testing can be applied to any type of campaign you can think of. Here are the most common subjects for testing.

Email campaigns

How do you increase the open rates and click-through rates of your email campaigns? The answer is: you need to A/B test.

In the context of email, A/B testing is the method of sending two variations of a campaign to two subsets of your subscribers in order to figure out which variation delivers the best results.

Say you aren’t sure what time of day is best for launching an email campaign. Typically, you’ve been sending a newsletter at 11 am. However, you assume a campaign sent at 1 pm would drive better open rates. By sending identical emails to two subsets of your list at 11 am and 1 pm, you’ll identify the best time to launch email campaigns.
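To keep the comparison fair, the two subsets should be picked at random rather than, say, by signup date. Here is a minimal Python sketch of such a split. The function name `split_list`, the placeholder addresses, and the fixed seed are illustrative assumptions, not part of any email platform’s API.

```python
import random

def split_list(subscribers, seed=42):
    """Shuffle a copy of the list and cut it into two halves of near-equal size."""
    shuffled = list(subscribers)           # copy so the original order is untouched
    random.Random(seed).shuffle(shuffled)  # seeded shuffle keeps the split reproducible
    middle = len(shuffled) // 2
    return shuffled[:middle], shuffled[middle:]

# Hypothetical list of 1,000 subscribers
subscribers = [f"user{i}@example.com" for i in range(1000)]
group_11am, group_1pm = split_list(subscribers)
print(len(group_11am), len(group_1pm))  # 500 500
```

Most email tools do this split for you, but the principle is the same: every subscriber lands in exactly one group, and chance alone decides which.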

Here are a few more A/B testing ideas for email marketing campaigns:

Subject lines. There are hundreds of types of subject lines.

Is it better to induce the reader’s curiosity or go straight to the point in your headlines?

You’ll never know until you test. Compare different subject lines against each other to see what messaging appeals to your audience the most.

Visuals. Should you use images in your marketing emails? While using images is considered an effective technique, visuals can often be distracting.

Discover whether your audience prefers visual content or plain texts by A/B testing including images and excluding them.

Content length. In order to improve click-through rates, it’s best to keep to short copy.

Does the rule work for your company? Just test short copy against long copy to find out.

CTAs. A CTA button drives attention to the action needed from a user.

The more topics you cover in your email content, the more CTAs you’ll want to include. The problem is that multiple CTAs might distract a user who will finally interact with none of the links.

At the same time, by limiting your content to only one subject, you appeal to a much narrower audience. What’s the solution?

The only way to learn the optimal number of topics and CTAs for your email campaigns is to A/B test.

Social media campaigns

While A/B testing email marketing campaigns is quite common, it seems to be an overlooked practice in social media marketing.

If you don’t want to guess about a perfect posting frequency and content length, test the following elements across every social network you use:

Texts. LinkedIn posts on company accounts can be up to 700 characters long, which allows you to be as detailed as you want. But should you use this opportunity? Define the best length for social media posts by sharing the same content introduced in different ways.

Visual content. Videos or images? Infographics or photos? Maybe no visuals at all? Test and see.

Slides. We’ve seen slides perform very well for driving engagement to the company’s LinkedIn account.

Does it mean they’re good for everyone? Definitely not. But it doesn’t hurt to experiment.

Times to post. When is your audience most active online? What days are the best in terms of generating social media engagement?

It often happens that companies have target audiences in different time zones. Without A/B testing different publishing hours, you won’t learn the best time to share content with your unique audience.

Link placements. Have you noticed that many companies and thought leaders share links to content they talk about in their posts in the comments? Many marketers believe that this simple practice helps to boost engagement.

There’s no official proof of this, but we recommend that you run a few tests to see whether the practice works for you.

Website design

It’s critical to keep optimizing your website to ensure more intuitive navigation which results in higher conversions.

It’s always exciting to see how small tweaks in website design can affect dwell time, bounce rates, and other user behavior metrics.

Below are the elements you might want to test first.

Navigation. Choosing from various types of website navigation isn’t a simple task.

The fewer links you have in the menu, the more attention your product pages will receive. How do you place all the options in a way they don’t distract qualified buyers and don’t freak out visitors who aren’t ready to purchase yet?

Group pages in several categories and test different nesting levels to identify the most intuitive navigation.

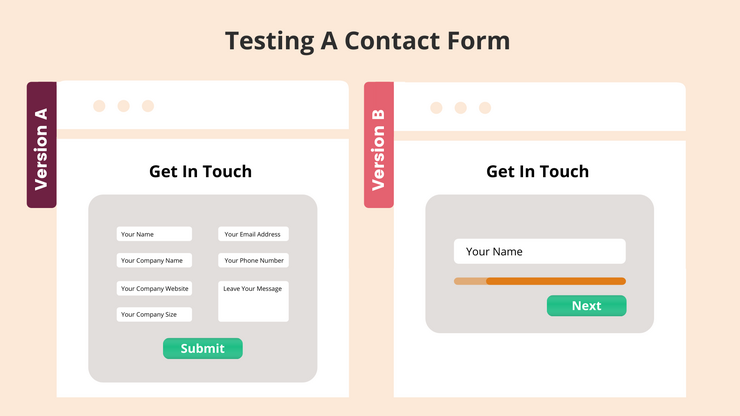

Contact form. There are so many things to test within one contact form it can take months before you determine the best format.

Change the number of form fields, test using open-ended questions against closed-ended ones, compare one-page forms to asking one question per page, etc. – but also remember to make one change at a time.

Banners. Should you place banners promoting lead magnets or other assets in the sidebar, in-article, or above the fold?

Test each placement one by one first and then start combining elements to see whether it helps to increase conversion rates without hurting the user experience.

Landing pages. Like contact forms, landing pages lend themselves to a wide range of tests. Here you can A/B test headlines, copy, CTAs, embedded forms, and many other components that are critical to your conversion rates.

A/B testing in paid online advertising

Without A/B testing, you have little chance to build successful online advertising campaigns.

It takes time to build an ad that resonates with the relevant audience and reaches your targets. However, even after you manage to develop a successful campaign, you’ll need to be ready to test new approaches soon since even great ads become exhausted one day.

What can you test?

When you think of testing your ads, you’ll realize that there are too many things that should be tested. And when the budget is limited, you might be tempted to test several things at a time – just to speed up the process.

This is one of the biggest mistakes you can make in online advertising. Such tests won’t give you any insights except for the understanding that completely different ads deliver different results, which is hardly surprising.

Therefore, it’s best to run proper A/B tests on your ads, changing one element at a time, so that you can attribute any difference in results to that specific change.

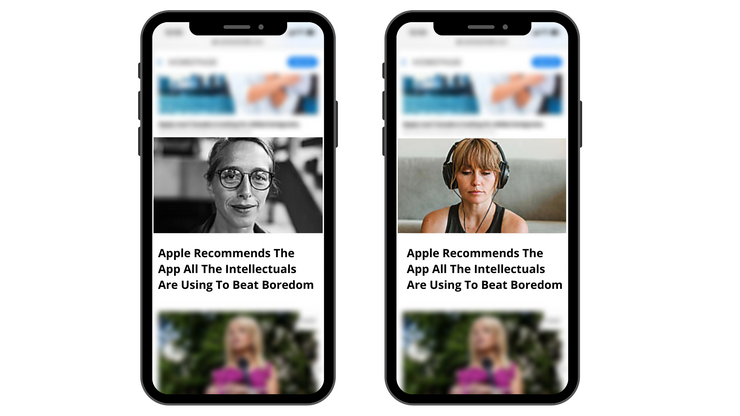

Thumbnails

Unless you’re running a PPC campaign, an image is the first thing that catches a user’s eye (or not).

During your tests, you might want to discover the results of the following manipulations:

- Comparing black and white thumbnails against color images.

- Including text in your images.

- Showing happy people in thumbnails.

- Using images that depict animals.

There are many more hypotheses to test, but we recommend that you start with the above-mentioned ideas.

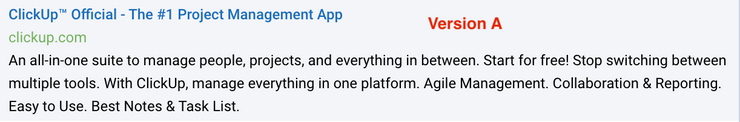

Ad copy

98% of ads are a waste of money because advertisers fail to match their message to the audience’s intent.

There’s no use in catchy thumbnails and high-converting landing pages if your ad headline doesn’t appeal to the right people.

Things to test within your ad copy:

- Power words. Will your ads perform better or worse if you use words that evoke emotion?

- CTAs. Should you add a call to action to the headline? What’s a perfect CTA?

- Transparency. Does mentioning the price of the product help to improve conversion rates of the campaign?

- Urgency. Are users more likely to click on your ad and make a purchase if you create a sense of urgency in your ad copy?

Testing ad copy will not only help you figure out the best messaging for a specific campaign but also give you an idea of what approaches will work well for your website, emails, and other marketing assets.

Landing pages

Minor changes to your landing pages can have a huge impact on lead quality and conversions.

Just like with any other element of your paid campaigns, there are tons of things to test on your way to creating a high-converting landing page:

- A headline. What type of headline speaks to your audience? It’s also important that it’s aligned with the ad headline.

- A lead capture form. How many fields should a form include so that it doesn’t dissuade users from making it to the ‘Submit’ button?

- Content format. Should you promote a product page or share a case study?

- Images. What kind of images works the best? Happy customers? Or maybe illustrations of your product in use?

- Page layout. What goes first: testimonials or product descriptions?

Target audiences

No matter how carefully you work on creatives, there’s still one more aspect that can either boost or ruin your campaign performance. A large part of campaign success depends on whether you manage to reach the right people.

Here are the most common targeting options to toggle on your way to finding the best audience for your campaigns:

- Countries.

- Interests.

- Age ranges.

- Different actions as criteria for retargeting.

How to do A/B testing

After you choose elements you want to A/B test, you need a plan.

How do you set up and perform tests that deliver accurate data?

Set a goal

First of all, you need to define what you’re planning to reach.

Do you want to increase conversions? Is it CPA that you want to reduce? Or maybe you’re aiming at improving lead quality?

Be specific with your goals and choose a primary metric you’ll be measuring. How should this metric change when your A/B test ends?

Have a ‘control’ version

Before you build a variation, you need to document the results the initial version (or ‘A’ version) has delivered.

Try not to change or pause the control version during the A/B test. If you want to test an ad, you’ll need to keep using the current version while testing a new variation. This way, you’ll make sure two ads receive (almost) equal portions of traffic in a specific time frame.

Create hypotheses

What change(s) will help you reach the goals you’ve defined in the first step? Your answer to this question is called a hypothesis.

Think of the reasons that might be hurting your campaign performance, have a look at how your current approach differs from your competitors’, look through the statistics, and you’ll be able to come up with a few solutions to your conversion problem.

Use these solutions as hypotheses for your upcoming A/B tests.

Prioritize your hypotheses

It’s likely that you have a list of hypotheses now. But you can’t test each of them right away.

Score your hypotheses based on their possible effect on your KPIs. Although the results will be quite subjective, it’s the best way to prioritize tests without piling adjustments on top of each other.
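One simple way to make that scoring explicit is sketched below in Python. It rates each hypothesis from 1 to 10 on expected impact, on confidence in the idea, and on ease of implementation, then averages the three. This is a common approach often called ICE scoring, used here as an assumption rather than something this guide prescribes. The hypotheses and ratings are made up for illustration.

```python
from dataclasses import dataclass

@dataclass
class Hypothesis:
    name: str
    impact: int      # expected effect on the primary KPI, 1-10
    confidence: int  # how sure you are the change will help, 1-10
    ease: int        # how cheap and quick the test is to run, 1-10

    @property
    def score(self) -> float:
        # Plain average of the three subjective ratings
        return (self.impact + self.confidence + self.ease) / 3

# Hypothetical backlog with made-up ratings
backlog = [
    Hypothesis("New landing page layout", impact=9, confidence=5, ease=3),
    Hypothesis("Shorter lead form", impact=8, confidence=6, ease=7),
    Hypothesis("Price in ad headline", impact=6, confidence=4, ease=9),
]

# Highest score first: this is the order in which to run the tests
ranked = sorted(backlog, key=lambda h: h.score, reverse=True)
for h in ranked:
    print(f"{h.score:.1f}  {h.name}")
```

The ratings remain subjective, but writing them down gives you a queue to test one hypothesis at a time.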

Define your timeline and budget

How much should you allocate to the test? How long does it take before you can say your test has reached statistical significance?

These questions are closely related. The more traffic you get, the faster you’ll collect enough data. If your campaign’s daily budget is low, it might take a few days or even weeks to get significant results.
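To get a rough feel for the numbers before launch, you can estimate the required sample size. The Python sketch below uses the standard normal-approximation formula for comparing two conversion rates. The 5% significance level, 80% power, and the example rates and traffic are assumptions chosen for illustration, and dedicated calculators may use slightly different formulas.

```python
import math
from statistics import NormalDist

def sample_size_per_variant(baseline_rate, expected_rate, alpha=0.05, power=0.8):
    """Visitors needed in EACH variant to detect the given lift (two-sided test)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)  # critical value for significance
    z_beta = NormalDist().inv_cdf(power)           # critical value for power
    pooled = (baseline_rate + expected_rate) / 2
    numerator = (
        z_alpha * math.sqrt(2 * pooled * (1 - pooled))
        + z_beta * math.sqrt(
            baseline_rate * (1 - baseline_rate)
            + expected_rate * (1 - expected_rate)
        )
    ) ** 2
    return math.ceil(numerator / (expected_rate - baseline_rate) ** 2)

def days_needed(n_per_variant, daily_visitors_per_variant):
    """Full days required to collect the sample at a steady traffic level."""
    return math.ceil(n_per_variant / daily_visitors_per_variant)

# Hypothetical scenario: lift conversion rate from 5% to 6%,
# with 400 visitors per day reaching each variant
n = sample_size_per_variant(0.05, 0.06)
print(n, days_needed(n, 400))
```

Under these assumptions, each variant needs roughly 8,000 visitors, which is about three weeks at 400 visitors a day. Smaller expected lifts push the required sample size up sharply.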

Create variations and launch tests

It can be tempting to edit variations while the test is running. Resist the temptation: changing a variation mid-test makes the results impossible to compare.

A new campaign might underperform in the first days, but that doesn’t necessarily indicate the outcome you’ll see in a week. Stay patient.

Analyze results

Remember you had to select the key metric to focus on? Now it’s time to take it and determine whether the results are significant enough.

Fortunately, there are a few A/B testing calculators that help to evaluate the results from marketing experiments:

- A/B Testing Significance Calculator by Neil Patel

- A/B Testing Calculator by SurveyMonkey

- A/B Test Sample Size Calculator by Optimizely

When you’ve got the green light, you can pick a winner. If a tested version shows better results, deploy it and disable the original version. If not, document the insights and proceed to other hypotheses.
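If you are curious what such calculators do under the hood, a common approach is a two-proportion z-test. The Python sketch below is a minimal version of it. The function name and the conversion counts are hypothetical, and the calculators listed above may use different methods.

```python
import math
from statistics import NormalDist

def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Return (z, two-sided p-value) for the difference in conversion rates."""
    rate_a, rate_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)  # combined conversion rate
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    z = (rate_b - rate_a) / std_err
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value

# Hypothetical results: control (A) vs. variation (B), 8,000 visitors each
z, p = two_proportion_z_test(conv_a=400, n_a=8000, conv_b=480, n_b=8000)
print(f"z = {z:.2f}, p = {p:.4f}")
if p < 0.05:
    print("The difference is statistically significant at the 5% level.")
else:
    print("Not enough evidence yet to pick a winner.")
```

A p-value below the threshold you chose in advance (5% is a common convention) is the “green light” to declare a winner on your primary metric.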

Tools for A/B testing

Keeping track of all your tests and processing results might take all your resources without leaving any capacity for producing new variations. But with an A/B testing tool, you can structure your campaigns effectively and reach more accurate conclusions without investing much time in the process.

Ad platforms like Facebook and Google typically offer their own tools for A/B testing, so we’ll highlight platforms that facilitate website conversion rate optimization and email A/B testing:

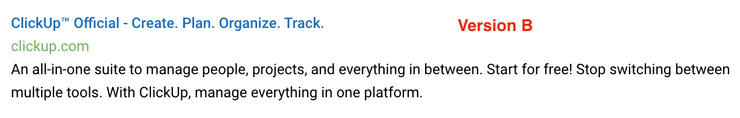

VWO

VWO is one of the most popular experimentation platforms for running A/B tests on websites, applications, and products.

The tool delivers deep insights into user behavior, creates heatmaps and session recordings, and collects all the data in the analytics dashboard.

For your convenience, VWO provides a point-and-click editor for creating new variations of landing pages.

Optimizely

Optimizely is an A/B testing and CRO tool offering a wide range of products. The platform enables you to run advanced experiments on your website, mobile applications, messaging platforms, etc.

With their experimentation tool, you can run multiple tests on one page simultaneously.

Optimizely also offers a package for developers willing to collaborate on product experiments and identify the best solutions.

Convertize

While being the most affordable tool on the list, Convertize isn’t inferior to the above-mentioned platforms.

The tool allows you to run code-free website experiments and provides all the insights needed to make informed decisions.

Mailchimp

Mailchimp is a great solution for email marketers willing to A/B test their campaigns effortlessly. The platform segments your audience, sends up to 3 variations of your campaign, and selects a winner based on the metrics you’ve specified.

With the multivariate testing feature, you can test up to eight email variations at a time.

Summing up

A/B testing allows you to skyrocket the effectiveness of your marketing campaigns, from paid ads to newsletters. And there’s no need to reinvent the wheel. Just have a look at one of your existing assets, think of one thing that could be improved, and launch a test.
